Let’s get the famous quote out of the way: Geoffrey Hinton, “the Godfather of AI,” said in 2016 that “People should stop training radiologists now.”

Even though AI adoption in radiology is growing rapidly, radiologists are still in high demand. So if AI isn’t replacing radiologists even as it becomes more widely adopted and integrated, what is its role?

And more importantly: What should its role be?

What is Clinical Decision Support?

Clinical Decision Support Systems (CDSS) are digital tools that assist healthcare professionals in making informed, confident choices. Importantly, with CDSS the final decision always remains with the clinician.

How Clinical Decision Support Systems Work in Practice

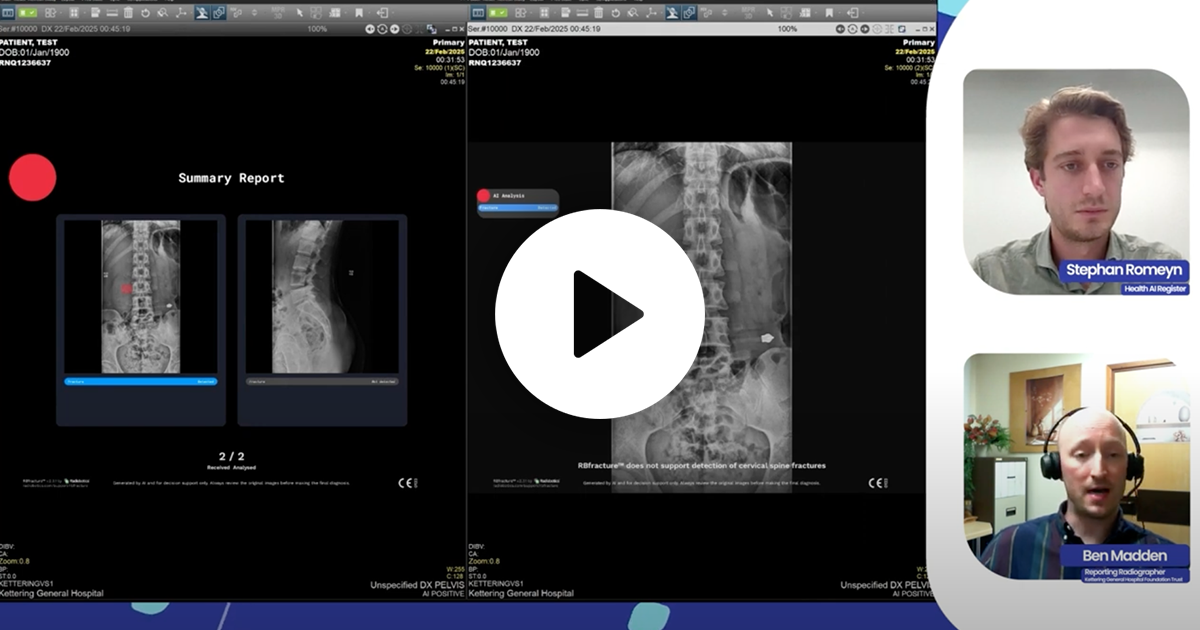

For AI tools like RBfracture™, decision support means operating on two fronts:

- Reducing missed fractures by identifying subtle or unexpected findings.

- Reinforcing negative findings by offering clinicians the confidence to move forward quickly when there are no findings.

In both cases, the AI acts as a second set of eyes, providing structured, consistent feedback that clinicians can verify, interpret, and act upon.

If AI Is So Accurate, Why Not Let It Make the Decisions?

AI does read better than a human (in most cases). In externally validated studies, we almost always find that AI outperforms readers of all levels, and that readers using AI outperform readers without AI.

But handing over final diagnostic authority to AI would be premature, and it risks eroding trust in clinical judgment. Medical decisions rely on more than pattern recognition; they require an understanding of context, history, and ethics.

CDSS frameworks respect that complexity. They ensure that AI complements human decision-making, enhancing safety, consistency, and efficiency without compromising accountability.

What Should AI’s Role in Decision Making Be?

I expect that one day, AI will make decisions rather than merely support them. That is why I think a conversation about what AI’s role should be is more relevant and interesting.

It’s not just the complexity of handing over a high-stakes decision to AI. It’s the idea that “AI should do the laundry so that I can do art.”

We see AI regularly “doing art” for consumer-grade uses: ChatGPT can write poetry, Sora is generating increasingly realistic-looking videos, etc. And I imagine you use these consumer-grade AI tools for all sorts of life maintenance “laundry”: Administrative chores, information filtering and summarization, etc.

In medical institutions, however, the “laundry” takes a more systemic form: Confirming positive findings and marking obvious negative findings for discharge.

Balancing the Human Experience with Decision Support

The biggest issues in healthcare today are supply (staffing, beds, clinicians, infrastructure) and demand (an aging population that is living longer).

Imagine: If AI can do a clinician’s “laundry,” then that clinician is free to attend to nuanced cases or simply provide better patient care.

This article was originally published in RAD Magazine, the voice of medical imaging and clinical oncology. You can read the original article at RAD Magazine.